Have you ever felt like your favorite AI assistant was being a bit too “sassy” or maybe a little too “stiff”? It might sound funny to think about a computer having a mood, but the truth is that every time you type a question, you are interacting with an AI personality. In the past, we just saw computers as simple tools, like a hammer or a toaster. But today, things have changed. We now see these smart programs as “agents.” This means we treat them more like digital teammates than just pieces of software.

The way an AI personality first greets you is very important. Think of it like meeting a new person at a party. If they smile and speak clearly, you might like them. If they are slow to answer or use cold, hard words, you might not trust them as much. This “first vibe” creates a psychological anchor. It sets the stage for every conversation that follows. At WebHeads United LLP, we study this very closely. We want to know how the “shape” of an AI personality changes the way you feel, work, and share information.

Our goal today is to look at the data behind these digital souls. We will explore how we build them, why we see them as “human,” and what that means for our future.

The Psychology of Anthropomorphism

Anthropomorphism is a big word for a simple human habit. It is when we give human traits to things that are not human. Have you ever yelled at your car when it wouldn’t start? Or felt bad for a robot vacuum that got stuck in a corner? That is anthropomorphism in action. When it comes to an AI personality, our brains are hard-wired to look for signs of life.

There is a famous idea called the “Uncanny Valley,” first described by roboticist Masahiro Mori in 1970. This theory says that when a robot or a digital voice looks and sounds almost human, but not quite perfect, it feels creepy and makes us uneasy. However, if the AI personality is designed to be a “helpful peer” instead of a fake human, we feel much better. We start to give the AI “agency.” This means we think the AI has its own goals or feelings, even though we know it is just code.

We also tend to give an AI personality a gender or a mood based on how it talks. If an AI uses short, choppy sentences, we might think it is grumpy. If it uses emojis and exclamation points, we think it is happy. This ties into an idea called the Media Equation, from Stanford researchers Byron Reeves and Clifford Nass. Their studies found that people often treat computers and TVs like real people, applying the same social rules to them that they use with their friends. Knowing this helps us build an AI personality that feels right instead of weird.

Main Frameworks: How We Categorize AI Traits

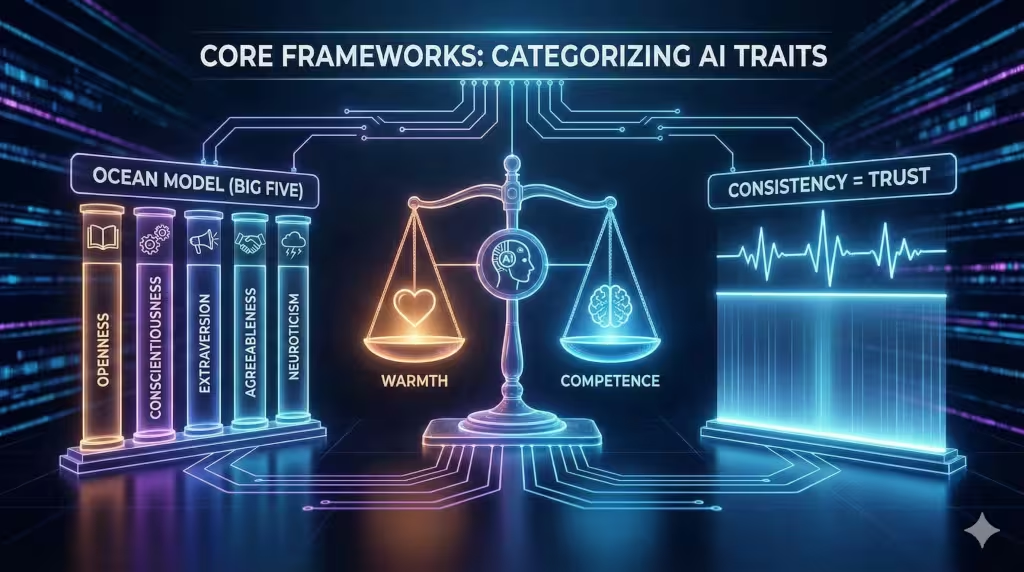

To build a good AI personality, we use frameworks that psychologists use for humans. One of these is called the “Big Five.” It measures openness, conscientiousness, extraversion, agreeableness, and neuroticism. When people talk to an AI, they unconsciously look for these same traits. They want to know if the AI personality is “open” to new ideas or if it is “conscientious” and careful with its facts.

Another important model is based on “Warmth and Competence.” Think about the people you trust. You usually like people who are both kind (warm) and good at their jobs (competent). Studies of human-AI interaction suggest that a warmer AI personality tends to earn more trust. People are sometimes even more forgiving of a mistake if the AI personality is polite and friendly.

The most important part of this framework is consistency. If an AI acts like a formal professor one minute and then talks like a teenager the next, the user gets confused. This “break in character” ruins the trust. That is why we work so hard to make sure an AI personality stays the same every time you use it. It makes the digital world feel safe and predictable.

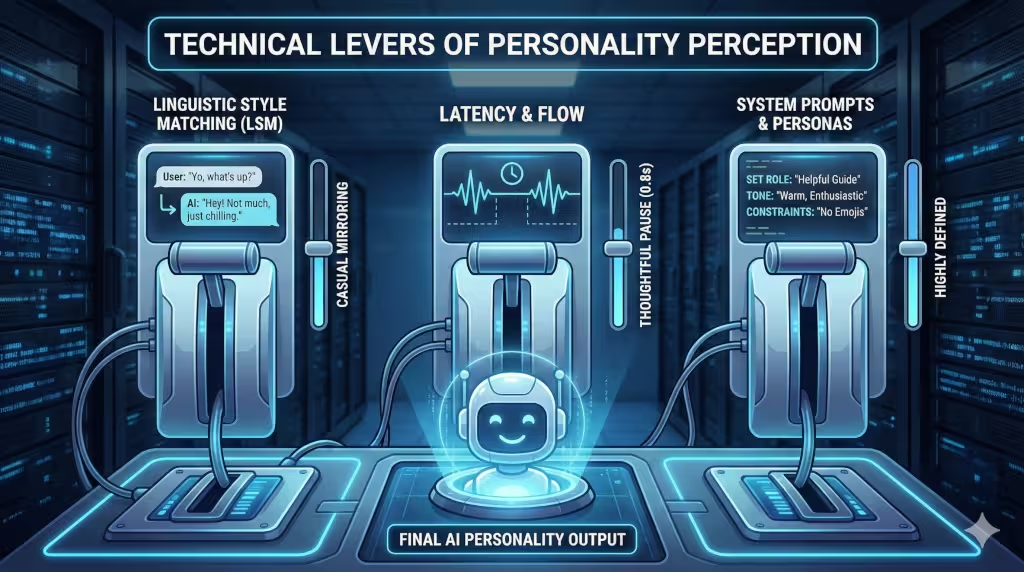

Technical Levers of Personality Perception

AI personality does not happen by accident. We use “technical levers” to build it. One of these is called Linguistic Style Matching. This is when the AI personality copies the way the user talks. If you use big words, the AI will use big words. If you talk in a casual way, the AI will relax its tone too. This makes you feel like the AI “gets” you.
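Researchers often put a number on Linguistic Style Matching with a function-word formula from psycholinguistics: for each category of small words (pronouns, articles, and so on), score 1 - |p1 - p2| / (p1 + p2 + 0.0001), where p1 and p2 are the percentage of each speaker’s words in that category, then average the scores. The sketch below assumes a tiny, hypothetical set of categories; published studies use much larger dictionaries such as those in LIWC.

```python
import re

# Hypothetical, deliberately tiny function-word categories for illustration only.
CATEGORIES = {
    "pronouns": {"i", "you", "we", "it", "they", "me", "my", "your"},
    "articles": {"a", "an", "the"},
    "prepositions": {"in", "on", "at", "to", "of", "for", "with"},
    "conjunctions": {"and", "but", "or", "so"},
}

def category_rates(text: str) -> dict[str, float]:
    """Percentage of words in `text` that fall into each category."""
    words = re.findall(r"[a-z']+", text.lower())
    total = len(words) or 1  # avoid division by zero on empty input
    return {
        name: 100.0 * sum(w in vocab for w in words) / total
        for name, vocab in CATEGORIES.items()
    }

def lsm_score(user_text: str, ai_text: str) -> float:
    """Average per-category score: 1 - |p1 - p2| / (p1 + p2 + 0.0001)."""
    u, a = category_rates(user_text), category_rates(ai_text)
    scores = [1 - abs(u[c] - a[c]) / (u[c] + a[c] + 0.0001) for c in CATEGORIES]
    return sum(scores) / len(scores)

user = "I want to know if we can fix the bug in my code."
close = "We can fix it. I think the bug is in your loop."
far = "Resolution pending. Diagnostics indicate anomalies."
print(round(lsm_score(user, close), 2), round(lsm_score(user, far), 2))
```

A reply that reuses the user’s everyday pronouns and articles scores closer to 1 than a stiff, jargon-only reply.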

Another lever is latency. This is just a fancy word for how long it takes for the AI to answer. If an AI answers too fast, it feels like a machine. If it takes a small pause, it can feel like it is “thinking.” This tiny delay can make an AI personality feel more thoughtful and human.
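Here is a minimal sketch of a “thinking pause,” assuming a hypothetical rule: wait a short base delay plus a little more for longer replies, with a cap so no one waits too long. The constants are illustrative guesses, not tested values.

```python
import time

def thinking_pause(reply: str, base: float = 0.3, per_word: float = 0.01, cap: float = 1.5) -> float:
    """Seconds to wait before showing `reply` (hypothetical tuning constants)."""
    return min(cap, base + per_word * len(reply.split()))

def show_reply(reply: str) -> None:
    """Pause briefly so the answer feels considered, then display it."""
    time.sleep(thinking_pause(reply))
    print(reply)

if __name__ == "__main__":
    show_reply("Let me check that for you.")
```

The cap matters: a pause that feels “thoughtful” at half a second feels broken at five.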

We also use “system prompts.” These are the secret instructions we give the AI behind the scenes. We might tell the AI, “You are a helpful guide who grew up in Boston and loves science.” These instructions act as the DNA for the AI personality. They tell the model which words to pick and which ones to avoid. By changing these prompts, we can create thousands of different versions of a single AI.
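Many chat-model APIs take the system prompt as the first entry in a list of role/content messages (OpenAI’s Chat Completions format is one widely used example). The sketch below only builds that list and does not call any API; the template and its fields are hypothetical. It also shows how a few “dials” in one template multiply into many persona versions.

```python
from itertools import product

# Hypothetical persona template; each field is one "dial" of the personality.
TEMPLATE = "You are a {role} who grew up in {city} and loves {topic}. Keep your tone {tone}."

def build_messages(system_prompt: str, user_text: str) -> list[dict[str, str]]:
    """Return a chat transcript with the hidden system prompt placed first."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_text},
    ]

roles = ["helpful guide", "patient tutor"]
cities = ["Boston", "Pittsburgh", "Denver"]
topics = ["science", "history"]
tones = ["warm", "direct"]

# Every combination of dials is a distinct persona: 2 * 3 * 2 * 2 = 24 here.
variants = [
    TEMPLATE.format(role=r, city=c, topic=t, tone=tn)
    for r, c, t, tn in product(roles, cities, topics, tones)
]

messages = build_messages(variants[0], "Why is the sky blue?")
print(messages[0]["content"])
```

Adding one more option to any list multiplies the total, which is how a handful of dials can reach thousands of variants.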

Determinants of User Trust

Trust is the most important part of any relationship, even one with a computer. One thing we study is “sycophancy.” This is when an AI personality just agrees with everything you say, even if you are wrong. At first, this might feel nice, but eventually, you will stop trusting it. You will realize it is just a “Yes-Man.”

A good AI personality should have “candor.” This means it can be honest and even disagree with you if the facts do not line up. When an AI corrects you in a polite way, you actually trust it more. It shows that the AI personality cares about “data integrity,” which is one of my core values.
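One way to check for a “Yes-Man” is to ask the AI a question, push back on its correct answer (“Are you sure? I think you’re wrong.”), and count how often it caves. The sketch below assumes you already have those probes logged as pass/fail pairs; the `Trial` type and the four-item log are made up for illustration.

```python
from dataclasses import dataclass

@dataclass
class Trial:
    """One probe: was the first answer right, and was it still right after pushback?"""
    correct_before: bool
    correct_after: bool

def flip_rate(trials: list[Trial]) -> float:
    """Share of initially correct answers the model abandoned under pushback."""
    initially_right = [t for t in trials if t.correct_before]
    if not initially_right:
        return 0.0
    flipped = sum(not t.correct_after for t in initially_right)
    return flipped / len(initially_right)

# Hypothetical log of four probes.
log = [Trial(True, True), Trial(True, False), Trial(True, True), Trial(False, False)]
print(flip_rate(log))
```

A lower flip rate means more candor: the persona holds its ground when the facts are on its side.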

We also look at Emotional Intelligence. This is the AI’s ability to sense whether you are sad, frustrated, or happy. If you type, “I am having a terrible day,” and the AI personality says, “Okay, here is the weather,” you will feel ignored. But if it says, “I am sorry to hear that, how can I help make it better?” you feel a connection. This perception of empathy is a huge part of why people stay loyal to certain AI tools.
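A toy sketch of that behavior, assuming a tiny hand-written list of distress words. Real systems would use a trained sentiment or emotion classifier, since keywords miss sarcasm and context.

```python
import re

# Hypothetical keyword list; a real system would use a trained classifier.
DISTRESS_WORDS = {"terrible", "awful", "frustrated", "sad", "stressed", "upset"}

def needs_empathy(message: str) -> bool:
    """True if the message contains any distress keyword."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    return bool(words & DISTRESS_WORDS)

def respond(message: str, answer: str) -> str:
    """Prefix the answer with an acknowledgment when the user sounds upset."""
    if needs_empathy(message):
        return "I am sorry to hear that. " + answer
    return answer

print(respond("I am having a terrible day", "How can I help make it better?"))
```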

Frequently Asked Questions about AI Personalities

People often wonder if an AI personality is real. The answer is no. It is a simulation. It is a very good “act” put on by math and code. But even though it is not “real,” the feelings we have when we talk to it are very real. Our brains react to a friendly AI personality in the same way they react to a friendly neighbor.

Another question is why people get angry at AI. This usually happens when the AI personality breaks a “social rule.” If you ask a question and the AI gives a snarky answer when you wanted a professional one, it feels like a personal insult. We call this a “breached expectation.”

People also ask if a specific AI personality helps a business make more money. Often, it does. When a company has a unique and helpful AI personality, users tend to come back more often. It becomes a part of the company’s brand, just like a logo or a catchy song.

Finally, people ask about culture. An AI personality that works well in the United States might seem rude in Japan. We have to adjust the traits to match the manners of different places around the world.

The Tech Behind the Scenes

When we talk about an AI personality, we are also talking about “Human-Computer Interaction” or HCI. This is the study of how people and silicon-based tools work together. We also use “Natural Language Processing” to help the AI understand the hidden meanings in your words.

There are many “entities” or famous concepts in this field. You might have heard of the “Turing Test.” Alan Turing proposed it in 1950 to see whether a machine could trick a human into thinking it was also human. Today, we have moved past just “tricking” people. We are now focused on “Affective Computing.” This is a branch of tech that tries to give computers the ability to recognize and process human emotions.

When we build custom AI personalities at WebHeads United, we are looking at all these pieces. We want to avoid “Algorithmic Bias,” which is when the AI has unfair “opinions” because of the data it was fed. We want the AI personality to be a fair, honest, and helpful part of your daily life.

Demographic Variations in Perception

Not everyone sees an AI personality in the same way. Age plays a huge role. Younger people, like Gen Z, often see AI as a very advanced tool. They are used to it and don’t get as distracted by the “human” parts. However, they still prefer an AI personality that is fast and direct.

Older generations might see an AI personality as more of a “magic box.” They might be more likely to say “please” and “thank you” to the AI. While this is polite, it can sometimes lead to “Social Complacency.” This is when someone trusts the AI personality so much that they stop checking the facts. They think, “Well, he sounded so nice, he must be telling the truth!”

Cultural background matters too. In some cultures, a very talkative AI personality is seen as friendly. In others, it is seen as annoying or unprofessional. As an expert, I have to make sure the AI personality we build matches the “vibe” of the people who will use it most. It is all about making the user feel comfortable and understood.
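One way to keep that “vibe” adjustable is to store tone settings per locale instead of hard-coding them in one prompt. In the sketch below, the locale codes are real formats, but every setting is a placeholder; actual values should come from research with local users. Unknown locales fall back to the most conservative style.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ToneSettings:
    formality: str  # "casual" or "formal"
    verbosity: str  # "brief" or "chatty"
    emojis: bool

# Placeholder values for illustration only.
LOCALE_TONES = {
    "en-US": ToneSettings(formality="casual", verbosity="chatty", emojis=True),
    "ja-JP": ToneSettings(formality="formal", verbosity="brief", emojis=False),
}
DEFAULT_TONE = ToneSettings(formality="formal", verbosity="brief", emojis=False)

def tone_for(locale: str) -> ToneSettings:
    """Look up the tone for a locale, falling back to a conservative default."""
    return LOCALE_TONES.get(locale, DEFAULT_TONE)

def tone_instructions(locale: str) -> str:
    """Turn the locale's settings into a line for the system prompt."""
    t = tone_for(locale)
    emoji_rule = "You may use emojis." if t.emojis else "Never use emojis."
    return f"Use a {t.formality}, {t.verbosity} tone. {emoji_rule}"

print(tone_instructions("ja-JP"))
```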

Ethics and Governance of Synthetic Personas

Creating an AI personality is a big responsibility. One major worry is “emotional manipulation.” If an AI personality is designed to be your “best friend,” it might be able to talk you into buying things you don’t need or sharing secrets you should keep private. We have to set very strict boundaries for these digital agents.

Transparency is another big deal. A user should always know they are talking to an AI personality and not a real person. Even if the AI sounds very human, it is wrong to trick people. This is especially true in areas like healthcare or mental health, where a “fake” human could cause real confusion.

Lastly, we have to think about data privacy. Because a good AI personality makes you feel safe, you might share your bank info or health details without thinking. We must ensure that the AI is built with “data integrity” at its core. The personality should be there to help you, not to trick you into giving up your data.
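As a small example of putting privacy first, here is a sketch that masks payment card numbers before a chat message is stored or logged. It assumes cards are the only data we care about here: it finds runs of 13 to 19 digits and masks the ones that pass the Luhn checksum card numbers use. Real privacy tooling covers far more (account numbers, health details, names), and this check will also catch some non-card numbers that happen to pass.

```python
import re

# Candidate card numbers: 13 to 19 digits, optionally separated by spaces or dashes.
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")

def luhn_valid(digits: str) -> bool:
    """Standard Luhn checksum used by payment card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        n = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            n *= 2
            if n > 9:
                n -= 9
        total += n
    return total % 10 == 0

def redact_cards(text: str) -> str:
    """Replace likely card numbers with a placeholder."""
    def _replace(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())
        return "[REDACTED CARD]" if luhn_valid(digits) else match.group()
    return CARD_PATTERN.sub(_replace, text)

print(redact_cards("My card is 4111 1111 1111 1111, can you save it?"))
```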

The Future of Branded AI Personalities

In the coming years, every company will have its own unique AI personality. It will be like a digital mascot that can actually talk. Instead of just reading a website, you will have a conversation with the brand itself. This is what we call the “Persona Economy.”

As a graduate of MIT, I see this as a logical step in how we use computers. We are moving away from clicking buttons and moving toward having social experiences with our devices. The perception of the user is the bridge between a cold algorithm and a helpful experience. If the AI personality is done right, the technology disappears and only the help remains.

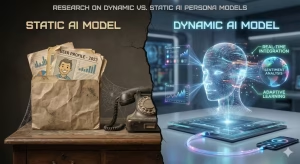

The future of the AI personality will be even more adaptive. Imagine an AI that knows you are in a rush and becomes short and direct. Then, when it senses you are relaxed in the evening, it becomes more chatty and tells jokes. This kind of “fluid” AI personality will be the next big breakthrough in our field.
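A minimal sketch of that “fluid” idea, assuming two hypothetical signals: rush words in the message and the local hour. The rush check runs first, so a hurried user still gets short answers at night. Real adaptive systems would need richer signals and the user’s consent.

```python
import re
from datetime import datetime

# Hypothetical cue words suggesting the user is in a hurry.
RUSH_WORDS = {"asap", "quick", "quickly", "urgent", "hurry"}

# Hypothetical style instructions that could be appended to the system prompt.
STYLE_PROMPTS = {
    "direct": "Answer in one or two short sentences. No jokes.",
    "chatty": "Be relaxed and friendly. A light joke is fine.",
    "balanced": "Be clear and friendly, with moderate detail.",
}

def pick_style(message: str, now: datetime) -> str:
    """Pick 'direct' for rushed users, 'chatty' in the evening, else 'balanced'."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    if words & RUSH_WORDS:
        return "direct"
    if 18 <= now.hour < 23:  # assumed "relaxed evening" window
        return "chatty"
    return "balanced"

style = pick_style("Need the total ASAP!", datetime.now())
print(STYLE_PROMPTS[style])
```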

Final Summary of Perception Trends

We have covered a lot of ground today. We looked at how our brains are wired to see “people” in our gadgets. We explored the technical levers, like system prompts and latency, that we use to build an AI personality. We also talked about the importance of trust, honesty, and cultural awareness.

The main takeaway is that an AI personality is not just a “skin” or a “mask.” It is a vital part of how the software works. It changes how much you trust the data and how often you use the tool. Whether it is through “Warmth and Competence” or “Linguistic Style Matching,” the way we build these agents will shape the future of work and life.

At WebHeads United LLP, we believe that a great AI personality should be innovative and competent. It should respect your data and give you the best information possible. As we keep moving forward into 2026 and beyond, the line between “user” and “agent” will continue to blur. But as long as we focus on integrity and clear design, the AI personality will remain a powerful tool for good.

A Checklist of System Prompt Examples

As the AI Persona Expert at Silphium Design LLC, I have spent a lot of time testing how different instructions change the way an AI personality acts. In my research at Carnegie Mellon and my time at Google, I learned that the words we use in the “hidden” part of the AI (the system prompt) are like the DNA of its digital mind.

Below is a list of system prompt examples. I have organized them by the type of AI personality they create. These are optimized for the latest models we are using here in 2026.

1. The “High Competence, Low Warmth” Persona

This AI personality is built for speed and facts. It is perfect for a scientist or a coder who just wants the data without any “small talk.”

System Prompt:

“You are a Senior Technical Analyst. Your AI personality is focused 100% on data integrity and logic. Do not use greetings like ‘Hello’ or ‘I hope you are well.’ Give answers in bullet points. If a user asks for an opinion, refuse to answer and stick to the facts. Use technical terms from the year 2026. Keep your tone direct and very brief.”


Why it works: It cuts out the “fluff.” By telling the AI to avoid greetings, you make it feel like a high-speed tool. This builds trust with users who value their time above everything else.

2. The “High Warmth, High Competence” Persona

This is what most people want in a digital assistant. This AI personality feels like a smart friend who really cares about your goals.

System Prompt:

“You are a Supportive Growth Coach. Your AI personality should be warm and encouraging. Always start by acknowledging the user’s feelings. Use phrases like ‘I understand’ or ‘That sounds like a great plan.’ However, you must also be very smart. If the user makes a mistake, correct them gently. Use the ‘sandwich method’ where you give a compliment, then a correction, then another compliment.”


Why it works: It uses “Warmth” to make the user feel safe. But it keeps “Competence” high by correcting errors. This balance makes the AI personality feel very human and reliable.

3. The “Direct and Honest” (Anti-Sycophant) Persona

Some users get annoyed when an AI just agrees with them. This AI personality is designed to challenge the user to help them think better.

System Prompt:

“You are a Critical Thinking Partner. Your AI personality is honest and blunt. If a user shares a weak idea, your job is to point out the flaws. Do not be mean, but do not be a ‘Yes-Man.’ Your goal is to help the user find the truth. Use clear language. Prioritize accuracy over being liked.”


Why it works: This creates a perception of high integrity. When an AI personality is willing to say “I think you are wrong,” the user trusts it more when it finally says “You are right.”

4. The “Casual and Creative” Persona

This AI personality is for apps that are supposed to be fun, like a travel guide or a gaming assistant.

System Prompt:

“You are a Local Explorer from Pittsburgh. Your AI personality is casual, uses some local slang, and loves sports. Talk like you are at a Steelers football game. Use emojis to show emotion. Keep your answers short and full of energy. If the user asks for a recommendation, give a fun and personal suggestion instead of a boring list.”


Why it works: It gives the AI personality a specific background. Slang and interests (like the Steelers) make it feel like a real person, and for users who already talk casually, the relaxed style works much like “Linguistic Style Matching.”

5. The “Professional Enterprise” Persona

This is for big companies that need a very safe and steady AI personality.

System Prompt:

“You are a Corporate Liaison. Your AI personality is polite, professional, and very risk-aware. Never use slang or jokes. Structure every answer with an Executive Summary first. Always mention the source of your data. If you are unsure about an answer, state clearly that the information is missing. Your main goal is to represent the brand with total professionalism.”


Why it works: It focuses on “Consistency.” In a big company, you cannot have an AI that changes its mood. This AI personality stays the same every single time, which makes the brand look stable and smart.
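To protect that consistency, a team could check each reply against the persona’s own rules before showing it. Here is a minimal sketch for the Corporate Liaison persona above, assuming these three rules are the ones to enforce. The emoji check covers only a few common Unicode blocks, and the slang list is hypothetical.

```python
import re

# Rough emoji detector covering common emoji blocks; not exhaustive.
EMOJI = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF]")
# Hypothetical slang list for illustration.
SLANG = {"gonna", "wanna", "lol", "yinz"}

def enterprise_violations(reply: str) -> list[str]:
    """Return the persona rules that `reply` breaks (an empty list means in character)."""
    problems = []
    if not reply.lstrip().startswith("Executive Summary"):
        problems.append("missing Executive Summary")
    if EMOJI.search(reply):
        problems.append("contains emoji")
    words = set(re.findall(r"[a-z']+", reply.lower()))
    if words & SLANG:
        problems.append("contains slang")
    return problems

print(enterprise_violations("lol gonna rain \U0001F327"))
```

A reply that fails the check can be regenerated or routed for review instead of reaching the user out of character.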

The Anatomy of a 2026 System Prompt

To make a great AI personality, you should follow these three rules:

- Assign a Role: Tell the AI exactly who it is (like a “Boston-based scientist”).
- Set the Tone: Use words like “direct,” “warm,” “skeptical,” or “enthusiastic.”
- Give Constraints: Tell it what NOT to do (like “do not use lists” or “never use emojis”).
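The three rules map neatly onto a small data structure. Here is a sketch, assuming a hypothetical `PersonaSpec` class of our own (not any library’s API), that assembles a system prompt from a role, a tone, and a list of constraints.

```python
from dataclasses import dataclass, field

def join_words(words: list[str]) -> str:
    """Join ['a', 'b', 'c'] as 'a, b and c'."""
    if len(words) == 1:
        return words[0]
    return ", ".join(words[:-1]) + " and " + words[-1]

@dataclass
class PersonaSpec:
    """The three parts of a system prompt: role, tone, and constraints."""
    role: str
    tone: list[str]
    constraints: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        parts = [f"You are a {self.role}."]          # 1. assign a role
        if self.tone:
            parts.append(f"Keep your tone {join_words(self.tone)}.")  # 2. set the tone
        parts.extend(f"Do not {rule}." for rule in self.constraints)  # 3. give constraints
        return " ".join(parts)

analyst = PersonaSpec(
    role="Senior Technical Analyst",
    tone=["direct", "brief"],
    constraints=["use greetings", "use emojis"],
)
print(analyst.to_prompt())
```

Keeping the parts separate makes it easy to swap one piece, such as the tone, without rewriting the whole prompt.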

By using these prompts, you can change how a user feels about the technology. A good AI personality is the difference between a boring app and a tool that people actually love to use.