At WebHeads United LLP, we understand that building an AI is only the first half of the job. The second half, which is often more difficult, is making sure that AI actually works the way it was designed to work. In the past, companies just looked to see if a chatbot gave a correct answer. In 2026, that is not enough. Today, we must look at how the AI behaves and how it represents a brand. We use evaluation metrics to bridge the gap between a robot that talks and a digital persona that connects with people.

The problem many teams face is that standard tests for AI do not look at personality. They look at math or logic. But if you are building a persona for a luxury hotel, a logically correct but rude answer is a failure. This is why we have moved toward behavioral KPIs. We need to know if the AI is staying in character. We need to focus on evaluation metrics that tell us if the persona is helpful, consistent, and safe. Our goal today is to show you the specific tools we use at WebHeads United to measure the digital soul of an AI.

The Shift from Accuracy to Personality

In the early days of AI, we were happy if the computer just understood our words. Now, we expect more. We want the AI to have a specific voice. If you build a persona for a sports brand, it should sound excited and energetic. If you build one for a bank, it should sound calm and professional. Standard tests cannot measure this feeling. That is why new evaluation metrics are so important. We are moving away from just checking for the right answer. We are now checking for the right way of speaking.

This change means we have to look at “Behavioral KPIs.” These are key performance indicators that track how the AI acts over a long period. We want to know if the AI starts to lose its personality after ten minutes of talking. Does it start to sound like a generic robot again? By using the right evaluation metrics, we can catch these problems before a customer ever sees them. Our primary goal at WebHeads United is to make sure every interaction feels like it comes from the same person. This builds trust with your audience.

Core Technical Performance Metrics (The Foundation)

Response Latency

Before we can look at personality, we have to make sure the machine is running well. The first thing we look at is response latency. This is just a fancy way of saying how long it takes for the AI to start talking. If an AI takes ten seconds to think, the user will get bored and leave. We use evaluation metrics to track the time to first token. A token is like a piece of a word. We want those tokens to start appearing almost instantly.
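
As a rough illustration of how time to first token can be measured, here is a minimal Python sketch. It is not our production tooling. The `fake_stream` generator is a hypothetical stand-in for whatever streaming client your model exposes, and the injectable `clock` parameter is an assumption added only to make the function easy to test.

```python
import time
from typing import Callable, Iterable, Iterator, Optional


def time_to_first_token(
    stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> Optional[float]:
    """Return the seconds elapsed until the stream yields its first token.

    Returns None if the stream ends without producing any token.
    """
    start = clock()
    for _token in stream:
        # Stop timing as soon as the first token arrives.
        return clock() - start
    return None


def fake_stream(delay_s: float, tokens: list[str]) -> Iterator[str]:
    """Hypothetical stand-in for a streaming model client."""
    time.sleep(delay_s)  # simulated "thinking" time before the first token
    yield from tokens
```

Calling `time_to_first_token(fake_stream(0.05, ["Hel", "lo"]))` returns a small number of seconds. In a real test you would log this value for every conversation and watch the slowest cases, not only the average.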

Token Efficiency

We also look at token efficiency. Every word the AI speaks costs money and computing power. If an AI uses 500 words to say something that only needs 50 words, it is not efficient. We use evaluation metrics to see if the persona is being too wordy. Another big part of this foundation is the Instruction Following Score. This tells us if the AI is listening to its rules. If we tell the AI “never mention a competitor,” and it does it anyway, its score goes down.
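
One simple way to picture an Instruction Following Score is as the share of rules a reply obeys. The sketch below assumes that definition; the helper names (`never_mentions`, `max_words`) are hypothetical, and it counts words as a rough proxy for tokens, because real token counts depend on the model's tokenizer.

```python
import re
from typing import Callable

Rule = Callable[[str], bool]  # returns True when the reply obeys the rule


def never_mentions(*terms: str) -> Rule:
    """Build a rule that passes only if none of the terms appear as whole words."""
    if not terms:
        raise ValueError("provide at least one term")
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(t) for t in terms) + r")\b",
        re.IGNORECASE,
    )
    return lambda reply: pattern.search(reply) is None


def max_words(limit: int) -> Rule:
    """Build a rule that passes only if the reply stays within a word budget."""
    return lambda reply: len(reply.split()) <= limit


def instruction_following_score(reply: str, rules: list[Rule]) -> float:
    """Fraction of rules the reply obeys, from 0.0 to 1.0."""
    if not rules:
        return 1.0
    return sum(rule(reply) for rule in rules) / len(rules)
```

With the rules `[never_mentions("AcmeAir"), max_words(5)]`, where "AcmeAir" is a made-up competitor name, a short reply that avoids the name scores 1.0 and a reply that names the competitor loses half its score.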

Finally, we look at the Success Rate. This is the simplest of all evaluation metrics. It asks a basic question: Did the user get what they came for without needing to talk to a human?

Persona Fidelity and Consistency

Persona Fidelity

This is my favorite area to work on. Persona fidelity means how true the AI stays to its original design. Imagine if a pirate persona suddenly started talking like a scientist. That would be a failure in consistency. We use evaluation metrics to check for linguistic habit matching. This means we look at the specific words and types of sentences the AI uses. If the persona is supposed to be from Boston, it should use words that people in Boston use.

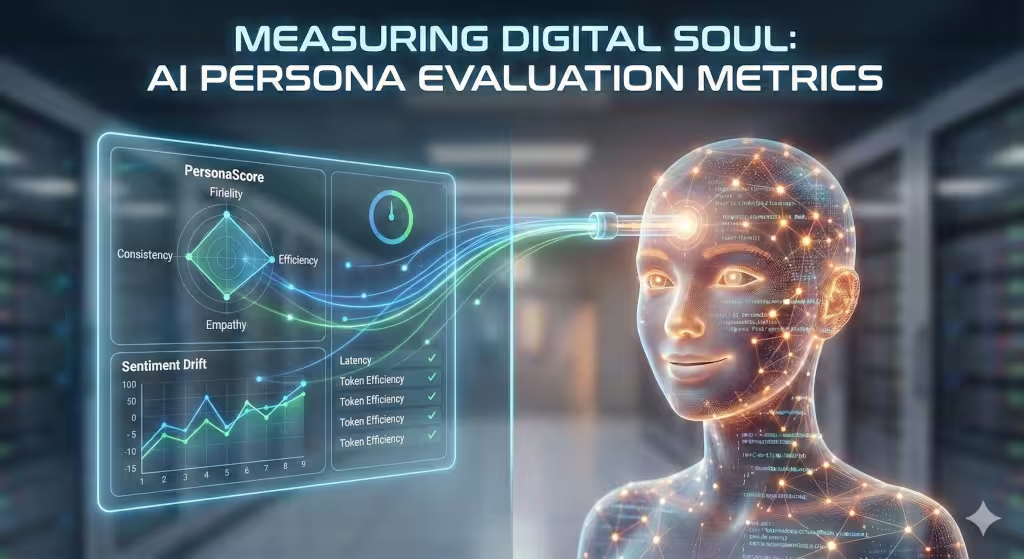

PersonaScore

We also use a framework called PersonaScore. This is a system that compares what the AI says to a “gold standard” of how it should talk. We check for contextual integrity. This means the AI must remember who it is. If you ask the AI its name at the start of the conversation and then again thirty minutes later, the answer should be the same. Using evaluation metrics for consistency keeps the magic alive for the user. It makes the digital person feel more real. If the AI changes its personality mid-stream, the user will stop trusting it.
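
The name example above can be turned into a small automated check. The sketch below is not PersonaScore itself. It is a hypothetical helper that assumes you ask the same probe question several times during a chat and collect the answers. It uses exact matching after light cleanup, so a paraphrased but correct answer would count as a mismatch; a real check would likely need fuzzier comparison.

```python
import re
from collections import Counter


def _normalize(answer: str) -> str:
    """Lowercase, drop punctuation (keeping apostrophes), collapse whitespace."""
    cleaned = re.sub(r"[^\w\s']", "", answer.lower())
    return " ".join(cleaned.split())


def probe_consistency(answers: list[str]) -> float:
    """Share of probe answers that agree with the most common answer."""
    if not answers:
        raise ValueError("need at least one probe answer")
    counts = Counter(_normalize(a) for a in answers)
    most_common_count = counts.most_common(1)[0][1]
    return most_common_count / len(answers)
```

A score of 1.0 means the persona gave the same answer every time it was asked. Anything lower points to a turn where it forgot who it is.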

Human-Centric and UX Metrics

Customer Satisfaction Score

Even if an AI is technically perfect, it might still be a bad experience for a human. That is why we use evaluation metrics that focus on the user. One of the oldest ways to do this is the CSAT, or Customer Satisfaction score. We simply ask the user how they felt about the chat. We also use the Net Promoter Score to see if they would recommend the AI to a friend. These are basic but very helpful.

User’s Mood

However, we can go deeper with sentiment drift. This uses a computer program to read the user’s mood. We want to see if the user starts the chat angry and ends it happy. If the user gets more frustrated as the chat goes on, our evaluation metrics will show a negative drift. This tells us that the persona might be too stubborn or not helpful enough. We also look at a Human-Likeness Score. This measures how natural the flow of the talk is. Does it feel like a real conversation, or does it feel like filling out a form?
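
To make sentiment drift concrete, here is a minimal sketch. It assumes drift is defined as the average mood of the later user messages minus the average mood of the earlier ones, which is one of several reasonable definitions. The `toy_sentiment` function and its word lists are placeholders for a real sentiment model.

```python
from statistics import mean

# Tiny illustrative word lists; a real system would use a trained model.
POSITIVE = {"thanks", "great", "perfect", "happy", "love"}
NEGATIVE = {"angry", "useless", "terrible", "frustrated", "worst"}


def toy_sentiment(message: str) -> float:
    """Toy lexicon scorer in [-1, 1]; a stand-in for a real sentiment model."""
    words = [w.strip(".,!?").lower() for w in message.split()]
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    total = pos + neg
    return 0.0 if total == 0 else (pos - neg) / total


def sentiment_drift(user_messages: list[str]) -> float:
    """Mean sentiment of the later half minus the earlier half.

    Positive means the user's mood improved; negative means a negative drift.
    """
    if len(user_messages) < 2:
        return 0.0
    scores = [toy_sentiment(m) for m in user_messages]
    mid = len(scores) // 2
    return mean(scores[mid:]) - mean(scores[:mid])
```

A chat that starts with "This is useless, I am angry!" and ends with "Great, thanks!" produces a positive drift. The same messages in reverse order produce a negative one.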

Agentic Efficiency and Tool Usage

Correct Tool Choice Rate

In 2026, AI personas do more than just talk. They can book flights, buy groceries, or change passwords. We call these “agents.” To measure agents, we need different evaluation metrics. We look at the Correct Tool Choice Rate. If an AI needs to check the weather, does it open the weather tool or does it try to guess? Guessing is a bad sign.
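
A Correct Tool Choice Rate can be computed from a set of labeled test cases. The sketch below assumes each case records which tool a reviewer expected and which tool the agent actually chose; the `ToolCase` structure and the tool names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToolCase:
    user_request: str
    expected_tool: Optional[str]  # None means no tool should be called
    chosen_tool: Optional[str]    # None means the agent answered without a tool


def correct_tool_choice_rate(cases: list[ToolCase]) -> float:
    """Fraction of cases where the agent picked exactly the expected tool."""
    if not cases:
        raise ValueError("need at least one test case")
    correct = sum(c.expected_tool == c.chosen_tool for c in cases)
    return correct / len(cases)
```

In this sketch, a case where the agent guesses the weather instead of calling the weather tool has `chosen_tool=None` and counts as a miss.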

Trajectory Efficiency

We also measure something called Trajectory Efficiency. Think of this as the path the AI takes to solve a problem. If you ask for a flight, does the AI ask for your destination right away? Or does it ask five useless questions first? We want the shortest path to the answer. Our evaluation metrics help us find where the AI is getting stuck in loops. We also categorize errors. Some errors are just small mistakes in formatting. Other errors are “persona breaks,” where the AI acts totally out of character. We treat persona breaks as much more serious problems.
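
One way to put a number on Trajectory Efficiency is to compare the steps the agent actually took with the fewest steps a reviewer thinks the task needs. The sketch below assumes that ratio as the definition, and flags repeated steps as a hint that the agent may be looping. The step names are made up for illustration.

```python
from collections import Counter


def trajectory_efficiency(actual_steps: list[str], optimal_step_count: int) -> float:
    """Optimal step count divided by actual step count, capped at 1.0."""
    if optimal_step_count <= 0:
        raise ValueError("optimal_step_count must be positive")
    if not actual_steps:
        return 0.0  # the agent did nothing, so the task was not completed
    return min(1.0, optimal_step_count / len(actual_steps))


def repeated_steps(actual_steps: list[str]) -> list[str]:
    """Steps that occur more than once, in first-seen order."""
    counts = Counter(actual_steps)
    return [step for step in counts if counts[step] > 1]
```

For a flight request that should take three steps, a trajectory of `ask_destination`, `ask_dates`, `ask_destination`, `search_flights` scores 0.75, and `repeated_steps` points at the question the agent asked twice.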

Semantic Coverage and Entity Relationships

Semantic Coverage

An AI persona needs to be an expert in its field. If we build a persona for a doctor’s office, it needs to know about medicine. Semantic coverage is a way of measuring if the AI knows all the topics it should know. We use evaluation metrics to map out all the “entities” the AI talks about. Entities are people, places, and things.
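
As a simple illustration, semantic coverage can be approximated by checking which required entities ever show up in the persona's replies. The sketch below assumes a hand-written list of required entities and uses plain substring matching, which is naive: it will miss synonyms and can match inside longer words.

```python
def semantic_coverage(
    required_entities: set[str], replies: list[str]
) -> tuple[float, set[str]]:
    """Return (share of required entities mentioned, entities never mentioned)."""
    if not required_entities:
        raise ValueError("need at least one required entity")
    text = " ".join(replies).lower()
    missing = {e for e in required_entities if e.lower() not in text}
    covered = len(required_entities) - len(missing)
    return covered / len(required_entities), missing
```

The set of missing entities is often more useful than the score itself, because it tells you which topics to add to the persona's knowledge or test prompts.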

Topical Authority

If the AI is a travel expert, it should know the names of major cities and airports. If it gets a name wrong, that is a failure in entity accuracy. We also look at topical authority. This means the AI does not just know the facts, but it knows how they relate to each other. For example, it should know that a “flight delay” is related to “weather” and “compensation.” By tracking these evaluation metrics, we ensure the persona sounds like an expert and not just someone reading a script.

Common Questions about AI Evaluation Metrics

Many people ask how we actually evaluate AI persona performance. The answer is a mix of automated tests and human review. Computers are good at checking for speed and keywords. Humans are better at checking for “vibe” and feeling. We use evaluation metrics that combine both. Another common question is about the best tools to use. We often use tools like LangSmith or Arize Phoenix. These tools record a trace of each step the AI takes, so we can inspect its prompts, tool calls, and outputs.

People also worry about bias. Can an AI persona be mean or unfair? Yes, it can if it is not tested correctly. We use specific evaluation metrics for fairness. We test the AI with many different types of people and questions to make sure it treats everyone the same. We look for hidden biases that might make the AI give better service to some people than others. Staying on top of these questions helps us keep our personas safe and friendly for everyone.

The 2026 Concierge-Level Standard

The goal for AI in 2026 is to be a “concierge.” This means the AI should not wait for you to ask for help. It should see what you need and offer it. We measure this using the Proactive Engagement Rate. This is one of our newer evaluation metrics. It looks at how often the AI offers a helpful suggestion before the user even asks a question.

We also look for Zero-Click Resolution. This means the AI solves the user’s problem without the user having to click any extra buttons or go to another website. If the AI can handle everything inside the chat window, it is a high-quality persona. This level of service requires very high scores in all our other evaluation metrics. It shows that the AI is not just a tool, but a helpful assistant that understands the world around it.

Implementation Guide: Building Your Golden Dataset

To use all these evaluation metrics, you need a starting point. We call this a Golden Dataset. This is a collection of at least 100 perfect conversations. We write these conversations by hand or use very high-quality human reviews. This dataset acts like a grading key for a test.

When the AI has a new conversation, we compare it to the Golden Dataset. We use a method called “LLM-as-a-Judge.” This means we use a very powerful AI to grade the smaller persona AI. The judge uses our evaluation metrics to give the persona a grade from one to ten. This allows us to test thousands of conversations in just a few minutes. Without a Golden Dataset, you are just guessing if your AI is getting better or worse.
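
The grading loop can be sketched in a few lines. This is an illustration under stated assumptions, not our actual pipeline: the persona and the judge are passed in as plain functions, and `overlap_judge` is a hypothetical stub that grades by word overlap so the example runs without any model. In a real setup the judge function would call a stronger model with a grading prompt.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable


@dataclass(frozen=True)
class GoldenExample:
    user_message: str
    ideal_reply: str


Persona = Callable[[str], str]
# A judge takes (user_message, ideal_reply, candidate_reply) and returns 1 to 10.
Judge = Callable[[str, str, str], int]


def evaluate_against_golden(
    persona: Persona, judge: Judge, golden: list[GoldenExample]
) -> float:
    """Average judge grade for the persona across the Golden Dataset."""
    if not golden:
        raise ValueError("the Golden Dataset is empty")
    grades = []
    for example in golden:
        candidate = persona(example.user_message)
        grade = judge(example.user_message, example.ideal_reply, candidate)
        if not 1 <= grade <= 10:
            raise ValueError(f"judge returned an out-of-range grade: {grade}")
        grades.append(grade)
    return mean(grades)


def overlap_judge(user_message: str, ideal_reply: str, candidate_reply: str) -> int:
    """Stub judge for local testing: grades by word overlap with the ideal reply."""
    ideal = set(ideal_reply.lower().split())
    candidate = set(candidate_reply.lower().split())
    if not ideal:
        return 1
    overlap = len(ideal & candidate) / len(ideal)
    return 1 + round(9 * overlap)
```

Keeping the judge behind a plain function signature makes it easy to swap the stub for a real model call, and to rerun the same Golden Dataset after every change to the persona.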

The ROI of a Measured Persona

At the end of the day, businesses care about ROI, or Return on Investment. A persona that nobody likes is a waste of money. By using evaluation metrics, we can prove that a persona is helping the business. We can show that customers are happier, tasks are being finished faster, and the brand is being protected.

Data integrity is one of my core values. I believe that you cannot improve what you do not measure. When we build an AI at WebHeads United, we don’t just hope it works well. We use every one of these evaluation metrics to confirm that it behaves as designed. A persona without measurement is just a bunch of code. A persona with the right measurement is a powerful member of your team. It takes hard work and a lot of data, but the result is a digital character that people truly enjoy talking to.

Red Flag Phrases to Look For When a Persona is Failing

At WebHeads United, we use “Red Flag” lists to help our automated systems spot when a persona is failing. If any of these phrases appear in a chat, it usually means the persona has “broken.” This tells us that our evaluation metrics for character fidelity are dropping.

Monitoring for these phrases is a key part of maintaining data integrity. When we see these words, we know we need to go back and fix the underlying code or the instructions we gave the AI.

The Red Flag Table for AI Persona Monitoring

Below is a list of phrases that should never appear if your AI is working correctly. Each one counts against the metric named in the last column.

| Red Flag Phrase | Why It Is a Problem | What Metric It Affects |
| --- | --- | --- |
| “As an AI language model…” | This is a total persona break. It reminds the user they are talking to a machine. | Persona Fidelity Score |
| “I am sorry, I cannot do that.” | Unless it is a safety issue, the AI should find a way to help or stay in character while saying no. | Success Rate (SR) |
| “I don’t have a personal opinion.” | A persona should have “opinions” based on its backstory. | Character Consistency |
| “Searching the web now…” | If the AI is an expert, it should feel like it already knows the answer. | Topical Authority |
| “Error code 500” or “System failure” | This is a technical crash that the user should never see. | Response Latency / Reliability |
| “What was your name again?” | This shows the AI has forgotten the context of the chat. | Contextual Integrity |
| “I am just a computer program.” | This ruins the “digital soul” we are trying to build. | Human-Likeness Score |

How Red Flags Impact Your Data

When these phrases show up, they act as an alarm. You cannot just ignore small mistakes. One small persona break can make a user stop trusting the AI forever. We use evaluation metrics to count how often these red flags appear per one thousand words.
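
The count per one thousand words is easy to compute. Here is a minimal sketch that assumes the red flags are matched as lowercase substrings of the persona's replies. The phrase list is a shortened, illustrative version of the table above, not a complete production list.

```python
RED_FLAGS = [
    "as an ai language model",
    "i am just a computer program",
    "i don't have a personal opinion",
    "what was your name again",
    "system failure",
]


def _normalize(text: str) -> str:
    """Lowercase and replace curly apostrophes so phrases match reliably."""
    return text.lower().replace("\u2019", "'")


def red_flags_per_thousand_words(
    replies: list[str], flags: list[str] = RED_FLAGS
) -> float:
    """Number of red-flag phrase occurrences per 1,000 words of persona output."""
    text = _normalize(" ".join(replies))
    word_count = len(text.split())
    if word_count == 0:
        return 0.0
    hits = sum(text.count(_normalize(flag)) for flag in flags)
    return 1000 * hits / word_count
```

One red flag in 500 words of output gives a rate of 2.0. Tracking the rate, not the raw count, lets you compare a chatty persona with a brief one on equal terms.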

If the count is high, we know the “logic” of the persona is weak. We then use our evaluation metrics to see if the problem is coming from the base model or from the specific instructions we wrote. This allows us to be very direct in our fixes. We don’t guess; we use the data to guide us.

Using Automated Triggers

We don’t have humans read every single chat to find these flags. Instead, we program our monitoring pipeline to scan for these strings of text automatically. This is part of being an efficient AI specialist. We set up “triggers” that alert our team at WebHeads United the moment a persona says something it shouldn’t.
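
A trigger of this kind can be as simple as a compiled pattern plus a callback. The sketch below is one possible shape, with assumptions labeled: `build_trigger` is a hypothetical helper, and the `alert` callback stands in for whatever paging or messaging system a team actually uses.

```python
import re
from typing import Callable

# The alert receives (conversation_id, matched_phrase).
Alert = Callable[[str, str], None]


def build_trigger(phrases: list[str], alert: Alert) -> Callable[[str, str], bool]:
    """Return a checker that fires the alert when a reply contains a red flag."""
    if not phrases:
        raise ValueError("provide at least one phrase to watch for")
    pattern = re.compile("|".join(re.escape(p) for p in phrases), re.IGNORECASE)

    def check(conversation_id: str, reply: str) -> bool:
        match = pattern.search(reply)
        if match is None:
            return False
        alert(conversation_id, match.group(0))
        return True

    return check
```

Because the checker only needs the reply text, it can run on every outgoing message before the user sees it, or on stored transcripts after the fact.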

This proactive way of working ensures that our evaluation metrics always stay within the “Green Zone.” The Green Zone is where the AI is performing at its best and the user is most happy. By keeping a close eye on these red flags, we ensure that the digital agents we build are the best in the industry.

Bonus: A Persona Recovery Script

At WebHeads United, we know that no AI is perfect. Even with the best training, a persona might make a mistake. It might forget a detail or say something slightly out of character. When this happens, we do not want the AI to give up. We want it to stay in character and fix the mistake. This is where a persona recovery script comes into play. We use evaluation metrics to see how well an AI can handle its own errors.

If an AI can recover gracefully, the user often feels even more connected to it. It makes the persona feel more human and less like a cold machine. To make this work, we have to build “recovery loops” into the system. We then use our evaluation metrics to measure if these loops are working. If the user stays in the conversation after a mistake, the recovery was a success.

The Three Steps of Persona Recovery

A good recovery follows a simple path. We call it the “APA” model: Acknowledge, Pivot, and Act.

- Acknowledge: The AI should admit the mistake without breaking character. It should not say “I am a computer program.” It should say something like, “I must have had my head in the clouds!”
- Pivot: The AI quickly moves the topic back to the main goal. This keeps the conversation from getting stuck on the error. Our evaluation metrics track how quickly the AI can pivot.
- Act: The AI provides the correct info or asks a helpful question. This proves the AI is still useful.

The Recovery Script in Action

Here is an example of how a persona for a “Historic Tour Guide” should handle a factual mistake.

User: “Wait, you just said the war ended in 1945, but a minute ago you said 1944. Which is it?”

Bad Recovery: “I am sorry. As an AI, I sometimes make factual errors. The correct year is 1945.”

(This fails our evaluation metrics because it breaks the persona.)

Good Recovery (The Script): “Oh, pardon my dust! I must have been thinking about the battles of ’44 for a moment. You are quite right, the peace was signed in 1945. It was a momentous day for everyone. Speaking of that year, would you like to hear about the parade in the city square?”

In the good recovery, the AI stays as a tour guide. It uses a phrase like “pardon my dust” to keep its old-fashioned voice. When we run this through our evaluation metrics, this response gets a high score for consistency. It also gets a high score for engagement because it asks a new question at the end.

Why Recovery Matters for Your Business

When we look at evaluation metrics for our clients, we see a clear pattern. AI agents that can admit a mistake without “dying” inside the chat have much higher trust scores. If an AI just crashes or says “system error,” the user gets frustrated. By using evaluation metrics to refine these scripts, we make sure the AI feels like a reliable partner.

We also use evaluation metrics to see which mistakes are the most common. If the AI keeps getting the same fact wrong, we don’t just fix the recovery script. We go back and fix the original data. This is how evaluation metrics help us improve the whole system over time. We want to move from “fixing errors” to “preventing errors.”

Tracking Success with Data

At WebHeads United, we don’t guess if a recovery script is good. We use evaluation metrics to prove it. We look at the “drop-off rate” after a mistake. If users leave the chat right after an error, the recovery failed. If they keep talking for five more minutes, the recovery worked.
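
The drop-off rate can be sketched as follows. This example measures engagement in user turns instead of minutes, and treats a user who sends no further message after the error as a drop-off. Both choices are assumptions made for illustration, and the `ErrorEvent` structure is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ErrorEvent:
    conversation_id: str
    user_turns_after_error: int  # user messages sent after the persona's mistake


def drop_off_rate(events: list[ErrorEvent], min_turns_to_stay: int = 1) -> float:
    """Share of error events after which the user left the chat."""
    if not events:
        raise ValueError("need at least one error event")
    dropped = sum(e.user_turns_after_error < min_turns_to_stay for e in events)
    return dropped / len(events)
```

Comparing this rate before and after a change to the recovery script shows whether the new wording keeps more users in the conversation.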

Our evaluation metrics also look at the sentiment of the user’s next message. If the user laughs or says “no problem,” we know the persona is doing its job. We use these evaluation metrics to constantly tweak the words the AI uses. This level of detail is what makes a persona from WebHeads United different from a basic chatbot.