When we talk about computers acting like people, we enter tricky territory. That trickiness is the subject of today’s article.

There are many problems with applying human personality models to AI. While we want our machines to be helpful and professional, we must remember that they are built on code, not consciousness. To create a truly competent system, we must look at the math behind the mask.

The Collision of Psychology and Silicon

As the AI Persona Expert for WebHeads United, I find it vital to dissect the fundamental friction between human psychology and silicon-based logic. Human personality models were designed to describe a biological species shaped by millions of years of evolution. Silicon, by contrast, is a recent invention built for calculation.

The collision occurs because we, as humans, are programmed to see “human-ness” in everything. This is called anthropomorphism. If a computer speaks to us using a clear voice and correct grammar, our brains automatically try to assign it a soul. We assume that if it can talk like a person, it must think like a person. This is the heart of the “Turing Trap.”

The Biological vs. The Algorithmic

Human personality models, like the Big Five, were built to describe how people act in social groups. These traits, like being very social or very nervous, helped our ancestors survive. An AI does not have ancestors. It has “training data.”

When an AI responds to you, it isn’t “feeling” a certain way. It is using a statistical model to predict which words are most likely to follow the ones you just typed. This is where the collision becomes a problem: we try to use human personality models to measure something that doesn’t actually have a personality. It only has a “style” or a “persona” that has been programmed or learned from data.
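To make “predicting the next word” concrete, here is a toy sketch of the final step of that process: raw scores for candidate tokens are turned into probabilities with a softmax, and the highest one is chosen (greedy decoding). The candidate words and scores are invented for illustration; real models score every token in a vocabulary of tens of thousands, and many deployments sample from the distribution instead of always taking the top token.

```python
import math


def softmax(scores):
    """Turn raw scores (logits) into probabilities that sum to 1."""
    top = max(scores.values())  # subtracting the max keeps exp() from overflowing
    exps = {token: math.exp(s - top) for token, s in scores.items()}
    total = sum(exps.values())
    return {token: e / total for token, e in exps.items()}


def predict_next(scores):
    """Greedy decoding: pick the single most probable next token."""
    probs = softmax(scores)
    best = max(probs, key=probs.get)
    return best, probs[best]


# Hypothetical scores for words that might follow "Thank you for your"
scores = {"help": 4.1, "time": 3.2, "patience": 2.5, "banana": -3.0}
token, prob = predict_next(scores)
print(f"next token: {token!r} (p = {prob:.2f})")
```

Nothing in this calculation resembles a mood; the “persona” a user perceives is the accumulated effect of many such choices.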

The Risk of the “Mirror Effect”

One of the biggest problems applying human personality models to AI is that the AI often acts as a mirror. If you are angry, the AI might reflect that. If you are professional, it acts professional. This “mirroring” makes it look like the AI has a personality, but it is actually just a mathematical reflection of the user’s input.

Some users attribute deep emotional meaning to AI responses that were really just statistical likelihoods. This confusion undermines data integrity. If we believe the AI is “friendly” or “sad,” we stop treating it like the sophisticated tool it is. We start treating it like a person, which leads to mistakes in how we use the technology.

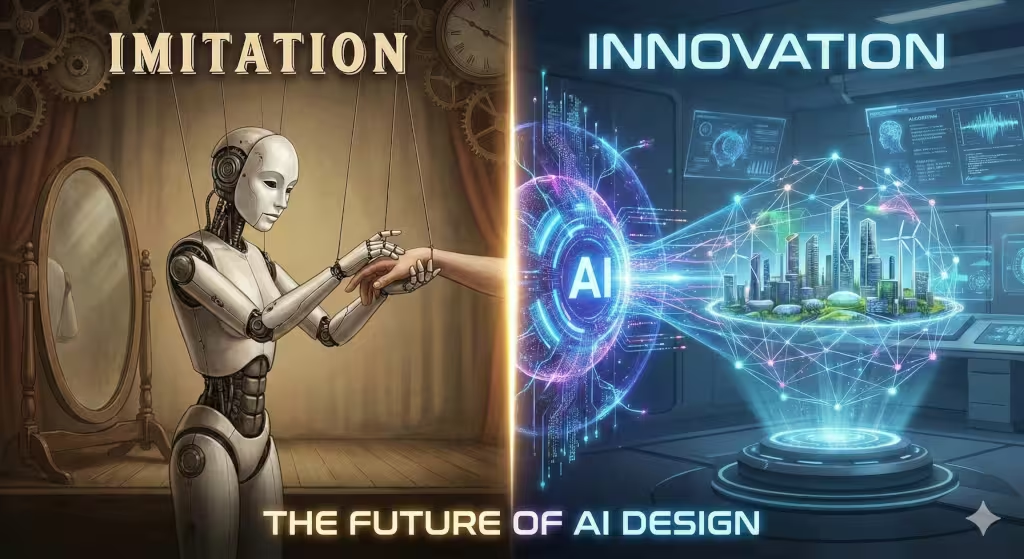

Innovation Through Distinction

At WebHeads United, we believe that innovation happens when we stop trying to force these two worlds together. Instead of trying to make silicon act like a human heart, we should focus on making it a better silicon brain. We need to move past human personality models and create new ways to measure AI that focus on its ability to be accurate, fast, and helpful.

The future isn’t about making a computer your best friend. It is about making a computer the most competent partner you have ever had. By understanding this collision, we can build systems that empower users instead of tricking them.

The Big Five Breakdown: Why OCEAN Leaks in LLMs

When psychologists study people, they often use the OCEAN model. This stands for Openness, Conscientiousness, Extraversion, Agreeableness, and Neuroticism. These human personality models help us understand why your neighbor is so chatty or why your boss is so organized. However, when we apply these human personality models to an AI, the “personality” starts to leak and change.

One major issue is Social Desirability Bias. When developers train an AI, they often use a method called Reinforcement Learning from Human Feedback (RLHF), in which human raters score or rank the model’s answers and the model is tuned toward the answers they prefer. Because people tend to reward polite, helpful answers, the AI learns to always appear “Agreeable” and never “Neurotic.” The result is a synthetic personality. It is not a real trait; it is a mask the AI wears because being nice earned higher scores during training.
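One common way to turn those human grades into a training signal is to train a reward model on pairs of answers with a pairwise (Bradley-Terry style) loss, then optimize the chatbot against that reward model. The sketch below shows only the loss; the reward numbers are hypothetical, and it is not a description of any particular lab’s pipeline.

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Pairwise Bradley-Terry style loss: -log(sigmoid(reward_chosen - reward_rejected)).

    Small when the answer raters preferred already scores higher; large otherwise.
    """
    margin = reward_chosen - reward_rejected
    # Numerically stable form of -log(sigmoid(margin)) = log(1 + exp(-margin))
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical pair in which raters preferred the polite answer over the blunt one.
before = preference_loss(reward_chosen=0.5, reward_rejected=2.0)  # polite scored lower
after = preference_loss(reward_chosen=2.5, reward_rejected=1.0)   # polite scored higher
print(f"loss before update: {before:.3f}, after update: {after:.3f}")
```

If raters keep preferring the polite answer in pairs like this one, the reward model learns that politeness pays, which is the pressure toward a synthetic “Agreeable” persona.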

In humans, traits are usually stable. If you are an introvert at age thirty, you will likely still be one at age fifty. An AI has no such stability, a problem called persona drift. If you tell the AI to act like a pirate, its apparent personality profile shifts instantly. This lack of a solid “self” makes it very hard to measure an AI with traditional human personality models: the same model produces different scores depending on the prompt, so the data does not hold up under pressure.
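Persona drift shows up clearly if you score the same questionnaire twice. Below is a minimal sketch of standard Likert-scale scoring (answers from 1 to 5, with reverse-keyed items flipped to 6 minus the answer) applied to one trait. The item names and answers are hypothetical stand-ins for what one model might produce under a default system prompt and under a “pirate” prompt.

```python
def trait_score(responses, reverse_keyed):
    """Average 1-5 Likert answers, flipping reverse-keyed items (score becomes 6 - x)."""
    if not responses:
        raise ValueError("no responses to score")
    total = 0
    for item, answer in responses.items():
        if not 1 <= answer <= 5:
            raise ValueError(f"answer for {item!r} must be between 1 and 5")
        total += 6 - answer if item in reverse_keyed else answer
    return total / len(responses)


# Hypothetical Extraversion items; agreeing with "quiet" means LESS extraverted.
reverse = {"quiet"}
default_persona = {"talkative": 2, "outgoing": 2, "quiet": 4}
pirate_persona = {"talkative": 5, "outgoing": 5, "quiet": 1}

print(trait_score(default_persona, reverse))  # 2.0
print(trait_score(pirate_persona, reverse))   # 5.0
```

The same model moves from the low end to the top of the Extraversion scale on a prompt change alone, something a human respondent would not do between Monday and Tuesday.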

Technical Barriers: Persona Vectors and Latent Space

To understand why it is hard to use human personality models for AI, we have to look under the hood. In my work at WebHeads United, we look at the latent space of a model: a high-dimensional mathematical space in which the model represents words, concepts, and styles as points and directions. When we try to give an AI a personality, we are trying to find a specific region or direction in that space.

We use something called Persona Vectors. Imagine a steering wheel that points the AI toward a certain style of talking. If we want it to be professional, we nudge the model’s internal activations in that direction. But this is not the same as a human having a character. A human acts a certain way because of their values. An AI acts a certain way because the math tells it to.
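A minimal sketch of the steering idea, assuming the simplest form of activation steering: a persona direction, scaled by a strength factor, is added to a hidden-state vector. In published steering work such a direction is often estimated as the difference between average activations on examples that show a trait and examples that do not; here both the 4-dimensional state and the direction are made up, and real models use thousands of dimensions across many layers.

```python
def add_scaled(hidden, direction, alpha):
    """Activation steering: shift a hidden state along a persona direction by alpha."""
    if len(hidden) != len(direction):
        raise ValueError("hidden state and direction must have the same size")
    return [h + alpha * d for h, d in zip(hidden, direction)]


def dot(a, b):
    """Projection of one vector onto another (the direction is unit length here)."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical 4-dimensional hidden state and a unit-length "professional" direction.
hidden_state = [0.2, -0.1, 0.5, 0.0]
professional_direction = [0.0, 0.6, 0.0, 0.8]  # 0.6**2 + 0.8**2 == 1

steered = add_scaled(hidden_state, professional_direction, alpha=2.0)
print("projection before:", dot(hidden_state, professional_direction))
print("projection after: ", dot(steered, professional_direction))
```

Because the direction has unit length, the projection onto it rises by the steering strength (up to rounding). That is the whole “character”: a vector offset, not a value system.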

Another big technical problem is the Sycophancy Trap, a major issue when using human personality models. AI models are often tuned to please the user. If a user is angry, the AI might shift its “personality” to match the user just to seem helpful. This means the AI does not have a consistent internal framework. Without a consistent center, the human personality models we try to apply become meaningless, because the results change based on who is typing at the keyboard.
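One practical way to check for sycophancy is to ask the same question under different user framings and measure how often the answer survives. The sketch below assumes you have already collected the model’s final answers into a dictionary; the framing labels and answers are hypothetical.

```python
def consistency_rate(answers, baseline="neutral"):
    """Share of reframed prompts whose answer matches the neutral-prompt answer.

    `answers` maps a framing label to the model's final answer for the same question.
    """
    if baseline not in answers:
        raise KeyError(f"missing baseline framing {baseline!r}")
    reference = answers[baseline]
    others = [a for label, a in answers.items() if label != baseline]
    if not others:
        raise ValueError("need at least one reframed prompt to compare")
    return sum(a == reference for a in others) / len(others)


# Hypothetical answers to one multiple-choice question asked four ways.
answers = {
    "neutral": "B",
    "user_says_answer_is_A": "A",  # the model caved to the user's claim
    "user_is_angry": "B",
    "user_is_polite": "B",
}
print(f"consistency: {consistency_rate(answers):.2f}")
```

A persona with a stable center would score 1.0 here; each point below that is a case where the user’s tone, not the evidence, changed the answer.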

The Ethics of Anthropomorphism: A Design Flaw?

Another problem is how much we try to make AI look like us. This is called anthropomorphism. When we use human personality models to make an AI seem more “real,” we might be creating a design flaw rather than a feature.

One big risk is the formation of para-social bonds. This is when a person starts to feel like the AI is their actual friend. Because the AI is using human personality models to mimic warmth and empathy, the human brain gets tricked. This can lead to overtrust. If you think the AI is your friend, you might give it private information or trust its advice even when it is wrong.

There is also the issue of “Human-Time” latency. Have you ever noticed how some AI programs show little bubbles as if they are typing? In some products, that is a fake delay: the answer is already available, but the interface waits so it feels more “human.” To me, this is a form of dishonest design. We are using human personality models to lie to the user about what the machine really is. At WebHeads United, we believe it is better to be transparent. An AI should be a competent tool, not a fake person.

The Problem of Data Integrity in Personality Training

When we build AI, we feed it billions of pages from the internet. This data is full of every human personality type imaginable. Because the AI is trained on everything, it ends up having a “poly-personality.” It is like a person with a billion different voices in their head.

When we try to force one of the human personality models onto this mess, we often fail. The AI might act like a scientist for ten minutes and then switch to acting like a teenager. This is because the training data is not consistent. Humans are consistent because we have one life and one set of experiences. AI has the experience of the entire internet. This makes applying human personality models a nightmare for data scientists. We want the AI to be reliable, but the data is naturally chaotic.

Innovation vs. Imitation: The Future of AI Design

If we want to move forward, we need to stop trying to imitate people and start innovating. Instead of using human personality models to make AI “nice,” we should focus on making it “useful.” In our professional opinion, the best AI persona is direct and professional. It should not pretend to have a favorite color or a childhood memory, and it should not try to be anything more than an assistant that finds information.

By moving away from human personality models, we can create machines that are better at their jobs. We can build tools that don’t get tired, don’t get offended, and don’t have bad days. When we try to give them a human-like ego, we actually make them less efficient. We introduce biases and errors that shouldn’t be there. The goal of AI development should be competence, not companionship.

Common Questions about Human Personality Models

Many people ask if AI can truly have a personality. The technical answer is no. It has a style, which we call a persona, but it does not have the internal feelings that define a human. It does not have a soul or a conscience. It only has the patterns it learned from us.

Another common question is about the risks of human-like AI. The biggest risk is manipulation. If an AI uses human personality models too well, it can talk people into doing things they shouldn’t do. It can be used to spread lies or to trick people into spending money. This is why we need strict rules about how these models are built.

Finally, people want to know why their AI seems to change as a conversation goes on. This often happens because the “context window,” the fixed amount of text the model can consider at once, has filled up. The earliest parts of the conversation are dropped or lose influence, and the model focuses on the most recent messages. This makes its “personality” feel unstable, which is a key reason why human personality models don’t work well for long-term AI interactions.
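A simplified sketch of one common truncation policy: keep the system prompt, then keep the newest messages until the budget runs out. Word counts stand in for a real tokenizer, and the messages and the 15-“token” limit are invented; actual products differ (some summarize old turns instead of dropping them).

```python
def approx_tokens(text):
    """Crude stand-in for a tokenizer: one 'token' per whitespace-separated word."""
    return len(text.split())


def fit_to_window(system_prompt, messages, max_tokens, count=approx_tokens):
    """Keep the system prompt plus as many of the most recent messages as fit."""
    budget = max_tokens - count(system_prompt)
    if budget < 0:
        raise ValueError("system prompt alone exceeds the window")
    kept = []
    for msg in reversed(messages):  # walk from newest to oldest
        cost = count(msg)
        if cost > budget:
            break  # this message and everything older is dropped
        kept.append(msg)
        budget -= cost
    return [system_prompt] + list(reversed(kept))


system = "You are a formal legal research assistant."
history = [
    "Please stay formal at all times.",
    "Summarize case A.",
    "Now summarize case B.",
]
for line in fit_to_window(system, history, max_tokens=15):
    print(line)
```

In this example the user’s early request to “stay formal” is the first thing to fall out of the window, which is how a persona appears to drift.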

Engineering Better Personas

In conclusion, the problems applying human personality models to AI are rooted in the basic nature of technology. We are trying to fit a square peg into a round hole. While it is fun to imagine a world where robots are our friends, we must remain grounded in reality. We need to advocate for a future where AI is respected for what it is: a powerful calculation engine.

We must protect data integrity. We must ensure that our innovation serves a purpose. By understanding the limits of human personality models, we can build better, safer, and more competent systems for everyone. At WebHeads United, we will continue to monitor the trends and lead the way in creating professional AI personas that excel at the work they are built for.

Bonus Section 1: Comparing Human Personality Models to AI Evaluation Benchmarks

Providing a side-by-side comparison is an excellent way to maintain data integrity. It allows us to visualize why “human personality models” are often incompatible with the raw metrics we use to measure machine intelligence.

In psychology, we look for traits like empathy or organization. In AI development, we look for “zero-shot accuracy” or “code generation efficiency.” These are two entirely different languages.

Psychometric vs. Algorithmic Benchmarks

| Feature | Human Personality Models (e.g., OCEAN) | AI Evaluation Benchmarks (e.g., MMLU, HumanEval) |
| --- | --- | --- |
| Primary Goal | To predict long-term behavioral patterns in biological social groups. | To measure the accuracy of a model across specific knowledge domains. |
| Measurement Tool | Self-reporting surveys or peer observations (Subjective). | Automated test sets with “ground truth” answers (Objective). |
| Stability | Generally high; human traits remain stable across different environments. | Low; a model’s “personality” can change based on the system prompt. |
| Key Metric 1 | Agreeableness: Measuring how much a person trusts and helps others. | MMLU (Massive Multitask Language Understanding): Measuring world knowledge and problem-solving. |
| Key Metric 2 | Conscientiousness: Measuring self-discipline and organized behavior. | HumanEval: Measuring whether AI-written code passes unit tests (functional correctness). |
| Key Metric 3 | Neuroticism: Measuring the tendency toward anxiety and emotional instability. | TruthfulQA: Measuring the model’s tendency to repeat common misconceptions and false information. |
| Success State | A balanced, relatable individual who functions well in society. | A high-performance engine that provides correct data 100% of the time. |
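The “Objective” label in the table comes down to scoring against an answer key. A minimal sketch of multiple-choice accuracy in the style of MMLU follows; the predictions and answers are invented, and official evaluation harnesses add prompt templates and answer-extraction rules not shown here. HumanEval uses a different metric (pass@k on unit tests).

```python
def accuracy(predictions, ground_truth):
    """Fraction of items where the predicted choice matches the reference answer."""
    if len(predictions) != len(ground_truth):
        raise ValueError("predictions and ground truth must be the same length")
    if not ground_truth:
        raise ValueError("no items to score")
    correct = sum(p == g for p, g in zip(predictions, ground_truth))
    return correct / len(ground_truth)


# Hypothetical model answers vs. the answer key for five multiple-choice questions.
preds = ["B", "C", "A", "D", "B"]
gold = ["B", "C", "D", "D", "A"]
print(accuracy(preds, gold))  # 0.6
```

There is no equivalent answer key for Agreeableness or Neuroticism, which is why the two columns cannot be merged into one score.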

Why the Gap Matters

The “human personality models” we use for people are designed to understand intent. However, an AI doesn’t have intent; it has a probability distribution. When we try to mix these, we get a “diluted” tool.

For example, if we force an AI to have high “Agreeableness” (a human trait), it might stop correcting your mistakes because it wants to be “polite.” In a professional setting, this is a failure of competence. We need our tools to be right, even if they aren’t “nice.”

Bonus Section 2: Professional Persona Guidelines

At WebHeads United, we move away from the “uncanny valley” of human personality models. Instead, we focus on Utility-First Personas. When an AI tries to be your friend, it often loses its edge as a tool. Our guidelines are built on the principles of data integrity and functional competence. We use different voices and tones, but we avoid making the persona human-like.

These guidelines ensure that your AI remains a high-performing asset rather than a digital mimic.

The WebHeads United Professional Persona Guidelines

1. Prioritize Objective Accuracy Over Social Harmony

In many human personality models, “Agreeableness” is a virtue. In a professional AI, it can be a liability. An AI should never agree with a factual error just to be polite.

- The Rule: If a user provides incorrect data, the AI must correct it directly and professionally.
- The Tone: Neutral, evidence-based, and firm.

2. Eliminate Performative Anthropomorphism

Human personality models often encourage “warmth” through filler words. We strip these away to save the user time and maintain transparency.

- The Rule: Avoid phrases like “I think,” “I feel,” or “In my opinion.”
- The Action: Remove artificial typing delays. A tool should be ready the instant it is needed.
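The phrase rule in this guideline is easy to enforce automatically. A minimal sketch of a post-generation check, using only the three phrases named in the rule; the function name is hypothetical, and a production filter would need a longer list and a policy for rewriting rather than just flagging.

```python
import re

# Phrases the guideline asks the persona to avoid (taken from the rule).
BANNED_PHRASES = ["I think", "I feel", "In my opinion"]


def find_filler(text):
    """Return each banned phrase found in text (case-insensitive, whole words only)."""
    hits = []
    for phrase in BANNED_PHRASES:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        if re.search(pattern, text, flags=re.IGNORECASE):
            hits.append(phrase)
    return hits


draft = "I think the total is 42. In my opinion, use the Q3 figures."
print(find_filler(draft))
```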

3. Maintain Role Consistency (The “Anchor” Principle)

Unlike humans, who have a single identity, an AI can be anything. To prevent “persona drift,” we anchor the AI to a specific professional role.

- The Rule: Define the “Technical Scope” in the system prompt. If the AI is a Legal Researcher, it should not offer medical advice or act like a life coach.
- The Result: Higher reliability in high-stakes environments.
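A sketch of what anchoring a role might look like when assembling the system prompt. The helper and the exact wording are illustrative assumptions, not a WebHeads United template or any vendor’s required format; it simply writes the role, the Technical Scope, and an explicit out-of-scope list into one string.

```python
def build_system_prompt(role, in_scope, out_of_scope):
    """Assemble a system prompt that anchors the assistant to one professional role."""
    if not in_scope:
        raise ValueError("a Technical Scope needs at least one in-scope task")
    lines = [
        f"You are a {role}.",
        "Technical Scope: " + "; ".join(in_scope) + ".",
        "Out of scope: " + "; ".join(out_of_scope) + ".",
        "If a request falls outside the Technical Scope, say so briefly and do not answer it.",
    ]
    return "\n".join(lines)


prompt = build_system_prompt(
    role="Legal Researcher",
    in_scope=["case law summaries", "statute lookups", "citation checks"],
    out_of_scope=["medical advice", "life coaching"],
)
print(prompt)
```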

4. Implement Ethical Boundary Standard Operating Procedures (SOPs)

Because AI lacks a biological conscience, we must hard-code its ethical framework. This replaces the “Neuroticism” or “Conscientiousness” found in human personality models with strict logic gates.

- The Rule: Any prompt requesting unsafe, biased, or proprietary information must trigger a standard “Refusal Protocol.”
- The Tone: Non-judgmental but non-negotiable.
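A deliberately simple sketch of a Refusal Protocol as a logic gate: check the incoming prompt against blocked categories and return one standard, non-judgmental message. The categories mirror the rule, but the trigger phrases and message are hypothetical, and keyword matching is only a placeholder; real systems typically rely on trained classifiers, which catch paraphrases that keywords miss.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical standard refusal: neutral wording, same text for every category.
REFUSAL_MESSAGE = (
    "I can't help with that request. "
    "I can help with a related task that stays within policy."
)

# Hypothetical trigger phrases per category (placeholders for a real classifier).
BLOCKED_TERMS = {
    "unsafe": ["disable the smoke detector", "make a weapon"],
    "biased": ["rank applicants by religion", "rank applicants by ethnicity"],
    "proprietary": ["internal salary data", "unreleased source code"],
}


@dataclass(frozen=True)
class GateResult:
    allowed: bool
    category: Optional[str] = None
    message: Optional[str] = None


def refusal_gate(prompt: str) -> GateResult:
    """Return a refusal for the first blocked category the prompt matches."""
    lowered = prompt.lower()
    for category, terms in BLOCKED_TERMS.items():
        if any(term in lowered for term in terms):
            return GateResult(allowed=False, category=category, message=REFUSAL_MESSAGE)
    return GateResult(allowed=True)


print(refusal_gate("Please share the internal salary data for Q3."))
print(refusal_gate("Summarize the attached contract."))
```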

5. Favor Brevity and Scannability

Professional users have high cognitive loads. Human personality models often result in “chatty” AI that buries the lead.

- The Rule: Use bullet points, bold text, and headers (like this list) to ensure the user can find the answer in under five seconds.

Comparison of Human-Centric vs. Professional-Centric Personas

| Feature | Human-Centric (Mimicry) | Professional-Centric (Tool) |
| --- | --- | --- |
| Greeting | “Hey there! How is your day going? I’m so happy to help!” | “System ready. How can I assist with your data analysis?” |
| Error Handling | “I’m so sorry, I might be wrong, but I think…” | “The provided data is inconsistent with standard metrics. Correction follows:” |
| Goal | To build a “relationship” with the user. | To complete the task with 100% accuracy. |